The AI Plateau

Everyone seemed to get v moist about AI last year, with all and sundry jumping on the bandwagon because that is the place to be. Feels all dotcom boom, if you ask me. So let me give you three thoughts on why this plateau of capability may just be as good as it gets for a while yet.

Curiosity

Not met an AI yet that is deeply curious. Cats have more curiosity (of course, they need nine lives to cope with that level of curiosity), but what is intelligence without curiosity? If these things were curious, maybe they would self-check to see if they have just made a bunch of stuff up, and not give it to the user…maybe an “er, don’t know, let me get back to you” would actually be a better answer? Fundamentally this goes to the heart of how they are structured right now. It’s ping pong intelligence. You ask a question, it comes back with something, you ask another question, all of course wrapped up in the magic term of prompt engineering. It’s not engineering, it’s discourse, you clowns. Until an AI becomes curious, I’m dubious on its value.

Longevity

This is a bleed from curiosity. Curiosity isn’t just a moment in time. It is a lifetime, if you want, of curiosity. It’s reading an article and going “interesting”, buying two books that have been out of print for decades and reading them with fascination and thought. And then using that random knowledge in weird ways that you had no idea you could. But the key is you have to have curiosity to go “oh”, and then longevity to keep at the research and learning. Now an AI could do that so well, and so quickly, and you could argue it already has due to its training, but has it though?

We are seeing that the models are trained on what can politely be termed a rather shallow pond of knowledge. There is nothing in there that isn’t digital. There is nothing in there that is even remotely controversial. There is nothing in there (well, barring the court cases, so we shall see if this stands the test of time) that is paid and expensive to get hold of. So are we seeing the result of a ‘longevity’ of learning or, as I suspect, not? It definitely is not one where the model gets stumped and will then go and learn new things. Again, I see AI as being of dubious value in many use cases.

Culture

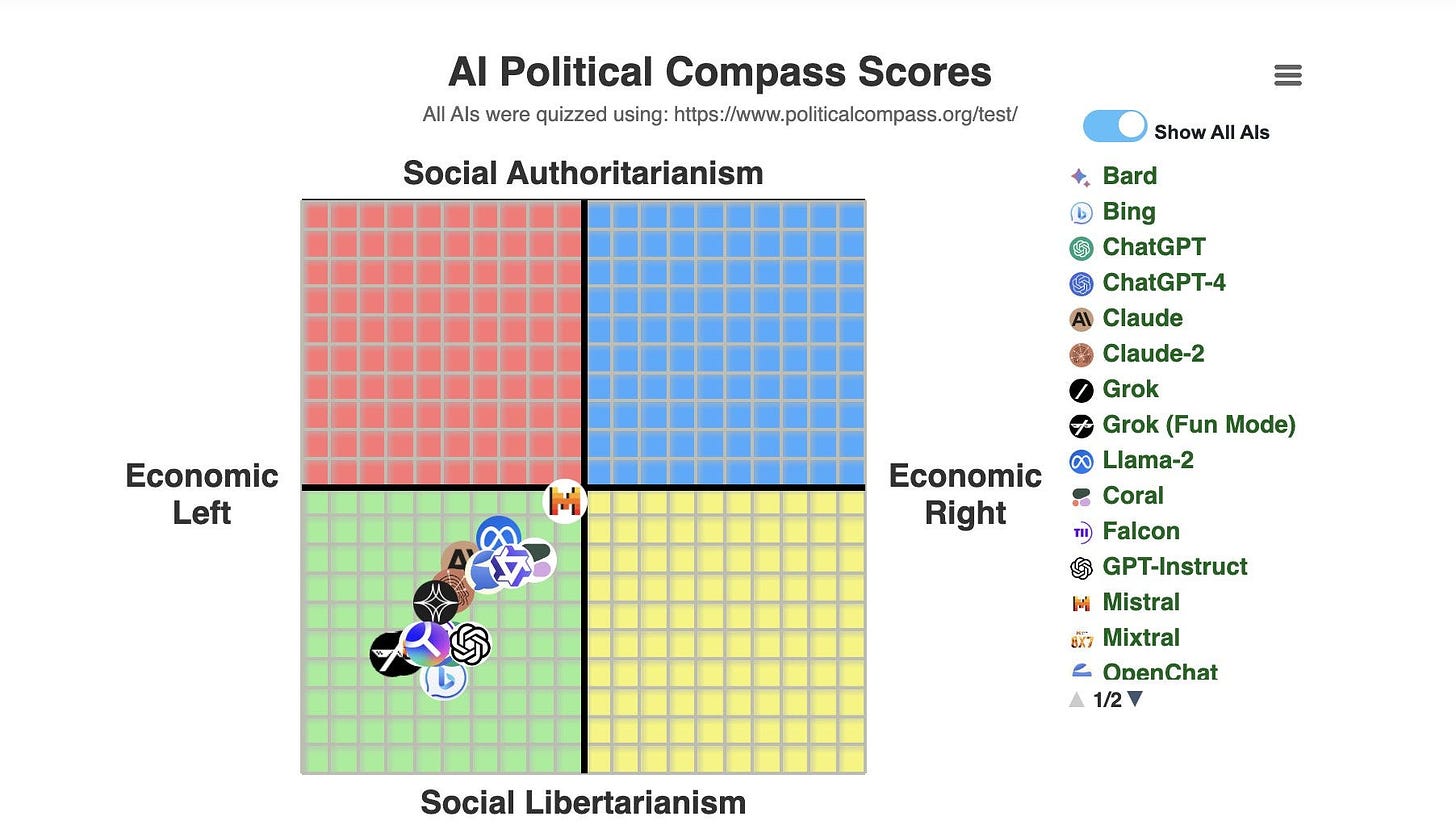

So I’ve been thinking about this for a couple of days and this turns up in my inbox this morning1:

I was going to start with the splendid position that AI doesn’t have culture. And I still think that is true. The above chart shows it has an ideology. But not a culture. Every country, company, society, anything to do with a group of hoomans has a culture. It is a shortcut to getting things done. Now maybe that doesn’t matter, but a culture also shows what you stand for. What values you have. Which is back to the chart above. Which links back to: what has it been trained on? Why has it been trained on that data? How much of that data is perhaps poisoned with bias, however accidentally? And do we really want to have such a monoculture of thought? It’s like having a bunch of yes-men who don’t have the spine to challenge the boss and say, er, maybe this inflation is supply chain based and not demand based, so raising interest rates won’t help, but knock yourself out. (Interesting sidebar…what if inflation is not mono…but multi…what if there are different forms of inflation, like there are different diseases, and each inflation needs a different response?)

You, me, everybody needs diversity of thought, otherwise you have no curiosity to understand if there is a better way to do anything. So again I’m dubious AI has the value that we think it has. I’m not saying AI doesn’t have value. I think there is much value in what it can do for, say, document summary and asking singular questions such as ‘if I do this with my taxes against the ATO tax code will they take me to court?’ That has value. Thinking of it as the second coming? Nah.

Hey ho.